How much user research?

Five guiding principles and three practical tips for outsized impact

There’s a question I get a lot. And it feels like a point of constant tension within internal teams. How much user research should we be doing?

On one side, there’s the feeling that you should be doing more. This is usually driven by that tiny voice telling you that you're cutting too many corners. Or maybe that tiny voice is your design leader. On the other side are the pressures of delivery: trying to hit business KPIs with fixed timelines and resources.

Here are five guiding principles to help you get the most out of user research:

Know what you need to learn.

Start every project with a list of open questions. Keep it visible. It keeps you focused and makes it easier to spot when you've learned enough.

Right-size the effort.

Not every decision needs a big study. Sometimes, a few user conversations or a quick test is all it takes to provide clarity or unlock a new idea.

Ask what’s at stake.

Invest in learning where the risk is highest. De-risk early so you don’t pay for it later. CTAs are cheap and easy to change. Rebuilding your feature isn’t.

Learn before you build.

The best insights come before design or development. Research done too late is just regret with documentation.

Make insights last.

Don’t let good research die in a deck. Share it with stakeholders. Reuse it. Build on it. Insights should compound, not disappear.

Tip #1: Timing matters

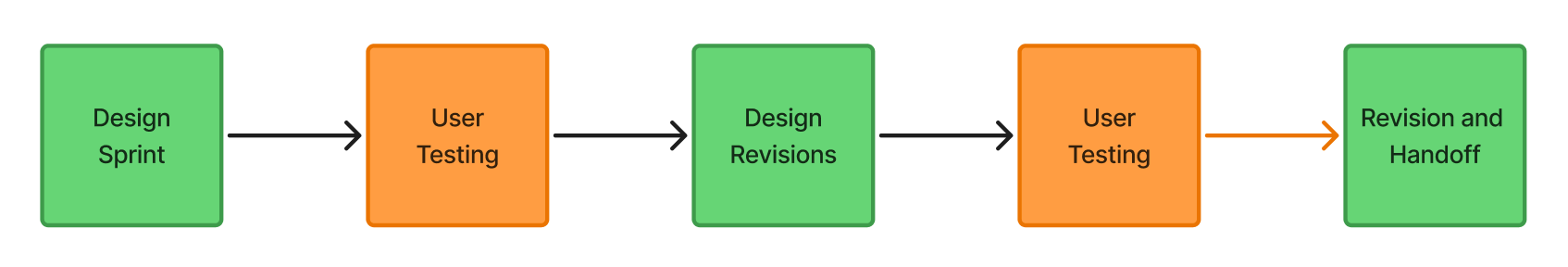

Here are two simple workflows. One with user engagement at the beginning, the other with user engagement throughout. Let’s unpack the big difference and the benefit of one vs the other.

Option A: Design then test

Option A engages users after design has occurred, commonly called design or user validation. The goal is to assess whether users agree with the proposed design solution. They “validate” the design as having met “conditions of satisfaction” or however you want to label it. This pattern continues until handoff to development.

Option B: Learn then design

Option B engages users before the design process, which we at Supergreen call user discovery. The goal is to understand the problem space or current experience, and unpack what works, what doesn’t work, and what would make things better. The next round of research puts proposed designs in front of users, surfaces new insights, and creates the foundation for the next design sprint (either a redesign or a move to a new feature).

The difference is that Option B enables design to identify opportunities to meaningfully improve the experience. It supports innovation because it is proactive. Option A, on the other hand, is not only reactive; it also serves to validate or invalidate internal assumptions rather than expand knowledge of the problem space. The insight it does generate often has nowhere left to meaningfully influence the designs. Beginning with user discovery amplifies value creation throughout the entire design process.

Tip #2: How might we…

Research should lead directly into design ideation. A nice way to make the handoff from one function to the other more efficient is to use How Might We’s.

Consider turning foundational insights into actionable opportunities by developing some How Might We’s as part of research. This serves as a natural starting point for design ideation. It also helps leadership contextualize the insights uncovered and identify potential paths forward. In a sense, it is pulling that voice of the user into the design process. At Supergreen, we like to put it right into the research deck like this:

Overlaying the current product with user-informed How Might We’s

Tip #3: Remove the friction

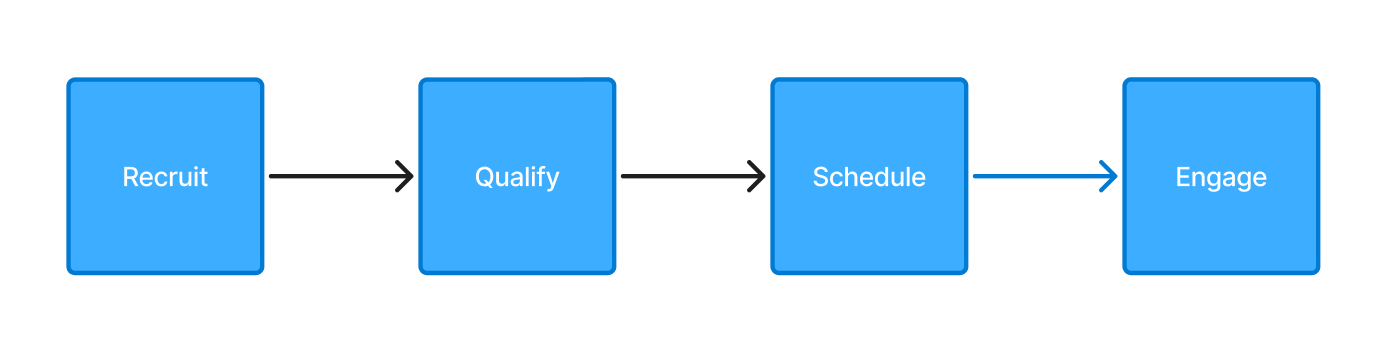

Consistently, I’ve found that the greatest point of friction in user testing is the time and effort required to identify, recruit, and schedule users.

In my experience, recruitment is often done ad hoc, usually through personal connections. It tends to fall to someone in a supporting function with limited availability, or to the designer or researcher themselves. The challenge with this approach is that it can skew user insights toward a small group of pre-conditioned users, and it takes time away from someone’s primary function. Unfortunately, if this effort isn’t planned in advance, it gets skipped. (If this is you, read Tip #1 again.)

Consider investing the time in forming a user feedback panel. This usually involves engaging your user base and inviting them to contribute, typically in exchange for a small reward or compensation. Users who opt in complete a short qualifying form and then go into a queue where they are available for research.

Keep a qualified cohort of users handy for testing, so there’s no excuse!

Having qualified users ready removes the biggest source of friction (and excuses) to engaging users throughout the design process.
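If someone on your team wants to track the panel in something more structured than a spreadsheet, the opt-in, qualify, and queue flow described above could be sketched roughly like this in Python. Everything here is an illustrative assumption, not a tool we use: the `Panelist` fields, the single screener rule, and the choice to rotate recruited users to the back of the queue are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Panelist:
    # Hypothetical fields; use whatever your own screener form collects.
    email: str
    role: str
    uses_product_weekly: bool


@dataclass
class FeedbackPanel:
    # Qualified users wait here in sign-up order.
    queue: deque = field(default_factory=deque)

    def opt_in(self, person: Panelist) -> bool:
        """Add a user to the queue only if they pass the screener."""
        qualified = person.uses_product_weekly  # hypothetical screener rule
        if qualified:
            self.queue.append(person)
        return qualified

    def recruit(self, count: int) -> list:
        """Pull up to `count` users for a study, then move them to the back
        of the queue so the same few people are not asked every time."""
        available = min(count, len(self.queue))
        picked = [self.queue.popleft() for _ in range(available)]
        self.queue.extend(picked)
        return picked
```

Rotating people to the back of the queue is one simple way to avoid leaning on the same small group of users, which is the skew that ad hoc recruiting tends to create.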

User research requires resources and intentionality. But done in the right amounts at the right time, it delivers a huge return on that investment.

Let’s do better business by being more human-centered.

Mike-

P.S. We’re looking to pilot a managed service that supports internal teams by setting up and managing user reference panels. Reach out for more info.